What are biological neural networks and artificial neural networks? Threshold logic unit, perceptron, propagation networks and applications of neural networks.

Before you start reading, I want to let you know that much of the material here, including the images, is taken from this book, in case you want to get a copy:

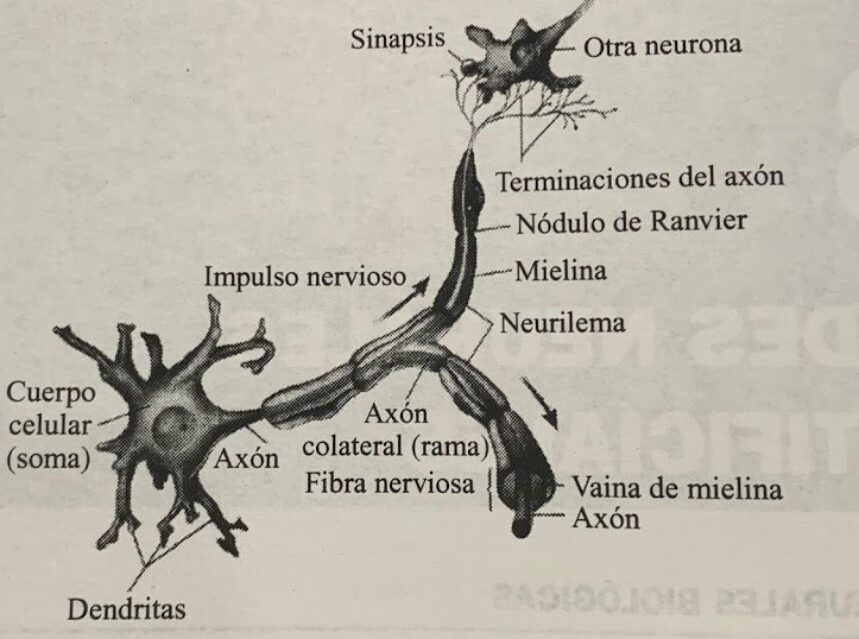

Biological neural networks:

They work through neurobiological processes that form highly complex relationships: neurons communicate electrically, passing impulses from one neuron to the next.

A neuron is made up of a cell body, called the soma, and two kinds of branches: the axon and the dendrites. Neurons receive electrical impulses from other neurons through their dendrites and send signals out of the cell body along the axon. The cell body and the dendrites receive the input signals; the cell body combines and integrates them and emits the output signal.

Artificial neural networks:

Artificial neural networks emulate part of how the human brain works. The main difference is that artificial networks only try to imitate the behavior of a biological neuron, using a simplified mathematical model of it.

They can be single-layer or multilayer. The network considered here is multilayer: it has an input layer, which receives information from the outside; a hidden layer, where the data is processed and the input is transformed into a form the next units can use; and an output layer, which gives the possible results.
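As a minimal sketch of the input → hidden → output flow just described, the following Python builds a tiny 3-2-1 network in which each layer takes the previous layer's outputs as its inputs. The weights, biases, the sigmoid activation and the names `dense_layer` and `forward` are all illustrative assumptions, not trained values or anything taken from the book.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def dense_layer(inputs, weights, biases):
    """One fully connected layer: each neuron computes the weighted sum of
    all its inputs plus its bias, then applies the sigmoid activation."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_w, inputs)) + b)
        for neuron_w, b in zip(weights, biases)
    ]

# Hypothetical, hand-picked parameters (not trained): 3 inputs -> 2 hidden -> 1 output.
HIDDEN_W = [[0.5, -0.2, 0.1],
            [0.3, 0.8, -0.5]]
HIDDEN_B = [0.0, 0.1]
OUTPUT_W = [[1.0, -1.0]]
OUTPUT_B = [0.2]

def forward(x):
    """Pass an input vector through the hidden layer, then the output layer."""
    hidden = dense_layer(x, HIDDEN_W, HIDDEN_B)     # hidden layer transforms the input
    return dense_layer(hidden, OUTPUT_W, OUTPUT_B)  # output layer produces the result

print(forward([1.0, 0.5, -1.0]))  # a one-element list with a value between 0 and 1
```

Writing each layer as the same function applied to the previous layer's output is what makes it easy to stack more hidden layers.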

Threshold logic unit:

TLUs compare the weighted sum of their inputs with a threshold: if the sum exceeds the threshold, the output is 1; otherwise it is 0.
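A TLU fits in a few lines of Python. This is a minimal sketch; the function name `tlu` and the example weights (1, 1) with threshold 1.5 are illustrative choices, picked so that the unit computes a logical AND.

```python
def tlu(inputs, weights, threshold):
    """Threshold logic unit: output 1 if the weighted sum of the inputs
    exceeds the threshold, otherwise 0."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))
    return 1 if weighted_sum > threshold else 0

# With weights (1, 1) and threshold 1.5 the unit behaves like a logical AND:
# only the input (1, 1) gives a weighted sum (2) above the threshold.
for a in (0, 1):
    for b in (0, 1):
        print(a, b, tlu([a, b], [1, 1], 1.5))
```

Note the strict comparison: a weighted sum exactly equal to the threshold does not exceed it, so it produces 0.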

Training:

A single TLU is known as a perceptron. Training consists of adjusting the weights until the unit produces the desired outputs, which amounts to minimizing an error function; one of the most widely used is the squared error. The inputs must be numeric. An output of 1 means that the dot product of the weights and the inputs is greater than the threshold; otherwise the output is 0.
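One way to sketch this training is the classic perceptron learning rule, shown below on the AND function. This is an illustrative version, not necessarily the book's exact procedure: the names `predict` and `train_perceptron`, the zero initial weights and the learning rate are assumptions. The sum of squared errors is recorded each epoch to monitor training; since each error is -1, 0 or +1, it equals the number of misclassified samples and reaches 0 once every output is correct.

```python
def predict(x, w, theta):
    """TLU output: 1 if the dot product w·x is greater than the threshold theta, else 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) > theta else 0

def train_perceptron(samples, lr=1.0, max_epochs=50):
    """Perceptron learning rule. Returns the weights, the threshold and the
    sum of squared errors recorded in each epoch."""
    w = [0.0] * len(samples[0][0])
    theta = 0.0
    history = []
    for _ in range(max_epochs):
        sse = 0
        for x, target in samples:
            error = target - predict(x, w, theta)  # -1, 0 or +1
            sse += error ** 2
            # Nudge each weight toward the desired output, in proportion to its input...
            w = [wi + lr * error * xi for wi, xi in zip(w, x)]
            # ...and move the threshold the opposite way (it acts like a weight on a constant -1 input).
            theta -= lr * error
        history.append(sse)
        if sse == 0:  # every sample classified correctly: stop
            break
    return w, theta, history

AND = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
w, theta, history = train_perceptron(AND)
print(history)  # [1, 3, 3, 2, 1, 0]
```

With zero initial weights, the learning rate only rescales the weights and threshold together, so the sequence of decisions is the same for any positive `lr`; `lr=1.0` keeps the arithmetic exact.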

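Perceptron

A perceptron is a single TLU, so it can only represent linearly separable functions: those in which the inputs that produce 1 can be separated from the inputs that produce 0 by a straight line (a hyperplane in higher dimensions).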
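A quick way to see this limit is to enumerate which two-input Boolean functions a single TLU can compute. The sketch below tries a small, arbitrarily chosen grid of weights and thresholds (an assumption made for illustration) and collects the resulting truth tables. It finds 14 of the 16 possible functions; XOR and its negation are missing. No grid is needed to rule XOR out: it would require 0 ≤ θ, w₂ > θ, w₁ > θ and w₁ + w₂ ≤ θ, but the middle two give w₁ + w₂ > 2θ ≥ θ, a contradiction.

```python
from itertools import product

# Input order for every truth table: (0,0), (0,1), (1,0), (1,1).
INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]

def tlu(x, w, theta):
    """Single TLU with two inputs."""
    return 1 if w[0] * x[0] + w[1] * x[1] > theta else 0

realizable = set()
for w1, w2 in product([-1, 0, 1], repeat=2):
    for theta in (-1.5, -0.5, 0.5, 1.5):
        # Record the truth table this particular TLU computes over all four inputs.
        realizable.add(tuple(tlu(x, (w1, w2), theta) for x in INPUTS))

AND = (0, 0, 0, 1)
XOR = (0, 1, 1, 0)
print(len(realizable))    # 14 of the 16 two-input Boolean functions
print(AND in realizable)  # True: AND is linearly separable
print(XOR in realizable)  # False: no single line separates XOR's classes
```

This is why XOR needs a multilayer network: a hidden layer can first compute linearly separable pieces, such as OR and NAND, which an output unit then combines.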

EXAMPLE OF A NEURAL NETWORK:

Three types of layers: input layers, hidden layers and output layers.

In the input layer we have a patient with certain medical values, and in the output layer we see whether that patient has a high or a low risk of developing a disease.

Feedforward networks:

These are graphs without loops. They are static: for a given input they produce only one set of output values.
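Because a feedforward network is a graph without loops, its neurons can be evaluated in topological order: each one only after all the neurons that feed it. This minimal sketch uses Python's standard `graphlib` module (Python 3.9+); the node names, the edge weights and the `tanh` activation are hypothetical choices for illustration.

```python
import math
from graphlib import CycleError, TopologicalSorter

# Hypothetical 2-2-1 network: each node maps to the set of nodes that feed into it.
GRAPH = {
    "h1": {"x1", "x2"},
    "h2": {"x1", "x2"},
    "y": {"h1", "h2"},
}
# Hypothetical weight for each connection (source, target).
WEIGHTS = {
    ("x1", "h1"): 0.5, ("x2", "h1"): -0.4,
    ("x1", "h2"): 0.9, ("x2", "h2"): 0.2,
    ("h1", "y"): 1.0, ("h2", "y"): -0.7,
}

def forward(inputs):
    """Evaluate every node after all of its predecessors (topological order)."""
    values = dict(inputs)
    for node in TopologicalSorter(GRAPH).static_order():
        if node in values:
            continue  # input node: its value comes from outside the network
        z = sum(WEIGHTS[(src, node)] * values[src] for src in GRAPH[node])
        values[node] = math.tanh(z)
    return values["y"]

def is_feedforward(graph):
    """True if the graph has no loops, i.e. a topological order exists."""
    try:
        list(TopologicalSorter(graph).static_order())
        return True
    except CycleError:
        return False

print(forward({"x1": 1.0, "x2": 0.0}) == forward({"x1": 1.0, "x2": 0.0}))  # True: no internal state
print(is_feedforward(GRAPH))                     # True
print(is_feedforward({"a": {"b"}, "b": {"a"}}))  # False: a feedback loop
```

The first check illustrates the "static" property: with no loops there is no stored state, so the same input always yields the same output.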

Feedback networks:

These do have loops, because of their feedback connections, so a neuron's output can depend on the network's previous state.
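The effect of a feedback connection can be seen with a single recurrent unit whose previous output re-enters its own weighted sum. This is a minimal sketch under stated assumptions: the text does not name a specific recurrent architecture, and the function `run_recurrent`, the weights `w_in` and `w_back`, and the `tanh` activation are hypothetical.

```python
import math

def run_recurrent(sequence, w_in=0.8, w_back=0.5, h0=0.0):
    """A single recurrent unit: its previous output is fed back as an extra input."""
    h = h0  # state carried from one time step to the next
    outputs = []
    for x in sequence:
        # The feedback connection: the previous output h re-enters the weighted sum.
        h = math.tanh(w_in * x + w_back * h)
        outputs.append(h)
    return outputs

# The same input (0.0) gives different outputs depending on what came before.
print(run_recurrent([0.0, 0.0]))  # [0.0, 0.0]
print(run_recurrent([1.0, 0.0]))  # second value is positive: the earlier 1.0 still echoes
```

Unlike the feedforward case, the output at each step depends on the whole input history through `h`, not only on the current input.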

Backpropagation networks:

An input pattern is applied to the first layer of the network and propagated through all the higher layers until an output is generated. Then the error is computed for each output neuron, and these errors are propagated backward through the network to adjust the weights.
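The forward pass, the output error and the backward step can be sketched for a tiny 2-2-1 network. The assumptions here are loud ones: sigmoid activations everywhere, the squared error E = ½(t − y)², the hand-picked initial weights, and the hypothetical function name `train_step`. The update formulas are the standard backpropagation rules for that setup, not a procedure taken from the book.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_step(x, target, W1, b1, W2, b2, lr=0.5):
    """One forward pass followed by one backpropagation update (squared error)."""
    # Forward pass: input layer -> hidden layer -> single output neuron.
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    y = sigmoid(sum(w * hj for w, hj in zip(W2, h)) + b2)

    # Error term of the output neuron: dE/dy = (y - target), times sigmoid' = y(1 - y).
    delta_out = (y - target) * y * (1 - y)
    # Propagate that error back to each hidden neuron through its outgoing weight.
    delta_hidden = [delta_out * W2[j] * h[j] * (1 - h[j]) for j in range(len(h))]

    # Gradient-descent updates (the hidden deltas above used the old W2).
    W2 = [w - lr * delta_out * hj for w, hj in zip(W2, h)]
    b2 = b2 - lr * delta_out
    W1 = [[w - lr * dj * xi for w, xi in zip(row, x)] for row, dj in zip(W1, delta_hidden)]
    b1 = [b - lr * dj for b, dj in zip(b1, delta_hidden)]
    return W1, b1, W2, b2, 0.5 * (target - y) ** 2

# Hypothetical starting weights; repeatedly train on a single pattern.
W1, b1 = [[0.1, 0.4], [-0.3, 0.2]], [0.0, 0.0]
W2, b2 = [0.5, -0.5], 0.0
losses = []
for _ in range(200):
    W1, b1, W2, b2, loss = train_step([1.0, 0.0], 1.0, W1, b1, W2, b2)
    losses.append(loss)
print(losses[0], losses[-1])  # the error shrinks as the weights are adjusted
```

Computing all the deltas before changing any weight keeps every gradient consistent with the forward pass that produced it.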

APPLICATIONS:

Medicine: analysis of breast cancer cells

Voice: voice assistants (Siri, Amazon Alexa)

Security: fingerprint recognition

Banking: check reading

Cars: autopilot systems